PREREQUISITES

- Create a Data Connection Integration where your dbt project data is built.

- Create a Code Repository Integration where your dbt project code is stored.

Getting started

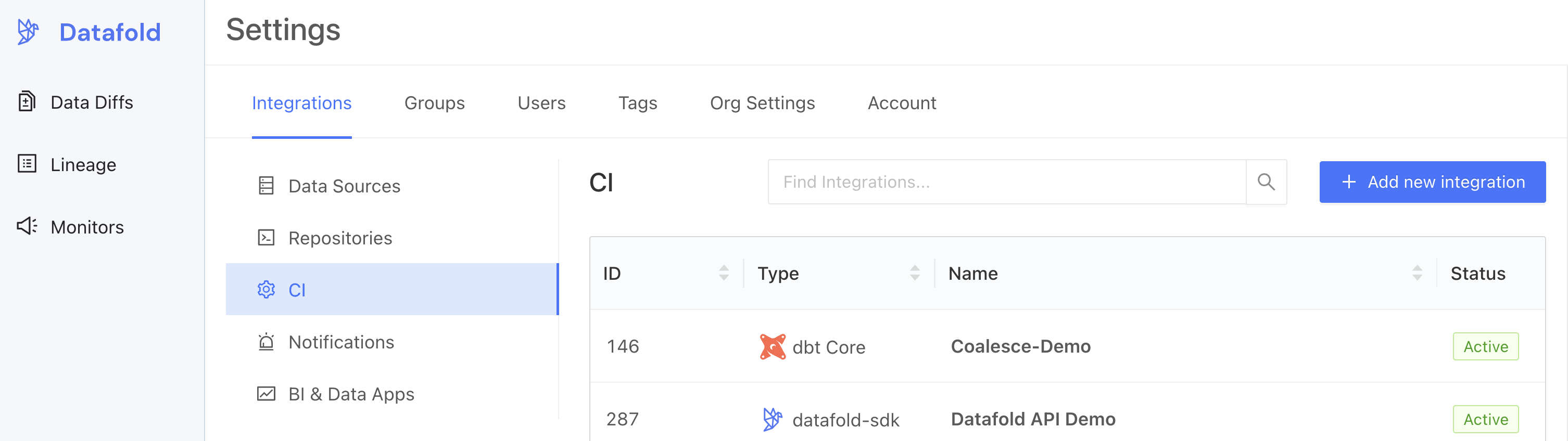

To add Datafold to your continuous integration (CI) pipeline using dbt Core, follow these steps:

1. Create a dbt Core integration.

2. Set up the dbt Core integration.

Complete the configuration by specifying the following fields:

Basic settings

| Field Name | Description |
|---|---|
| Configuration name | Choose a name for your Datafold dbt integration. |
| Repository | Select your dbt project. |
| Data Connection | Select the data connection your dbt project writes to. |
| Primary key tag | Choose a string for tagging primary keys. |

Advanced settings: Configuration

| Field Name | Description |
|---|---|
| Import dbt tags and descriptions | Import dbt metadata (including column and table descriptions, tags, and owners) to Datafold. |
| Slim Diff | Data diffs will be run only for models changed in a pull request. See our guide to Slim Diff for configuration options. |
| Diff Hightouch Models | Run Data Diffs for Hightouch models affected by your PR. |
| CI fails on primary key issues | The existence of null or duplicate primary keys will cause CI to fail. |
| Pull Request Label | When this is selected, the Datafold CI process will only run when the `datafold` label has been applied. |
| CI Diff Threshold | Data Diffs will only be run automatically for a given CI run if the number of diffs doesn’t exceed this threshold. |
| Branch commit selection strategy | Select “Latest” if your CI tool creates a merge commit (the default behavior for GitHub Actions). Choose “Merge base” if CI is run against the PR branch head (the default behavior for GitLab). |
| Custom base branch | If defined, CI will run only on pull requests with the specified base branch. |
| Columns to ignore | Use standard gitignore syntax to identify columns that Datafold should never diff for any table. This can improve performance for large datasets. Primary key columns will not be excluded even if they match the pattern. |
| Files to ignore | If at least one modified file doesn’t match the ignore pattern, Datafold CI diffs all changed models in the PR. If all modified files should be ignored, Datafold CI does not run in the PR. (Additional details.) |
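The "Files to ignore" rule can be pictured as a small decision function. The sketch below is illustrative only: `should_run_ci` is a hypothetical helper, and it uses plain shell glob matching as a stand-in for the gitignore syntax that the setting actually accepts, so edge cases such as negated patterns are not modeled.

```shell
#!/usr/bin/env bash
# Sketch of the "Files to ignore" decision.
# Assumption: a single shell glob stands in for a gitignore-style pattern.

# should_run_ci PATTERN FILE...
# Succeeds (exit 0) if at least one modified file does NOT match PATTERN,
# fails (exit 1) if every modified file is ignored.
should_run_ci() {
  local pattern="$1"; shift
  local file
  for file in "$@"; do
    case "$file" in
      $pattern) ;;        # ignored file: keep checking the rest
      *) return 0 ;;      # one non-ignored file is enough to run CI
    esac
  done
  return 1                # all modified files matched the ignore pattern
}
```

With the pattern `*.md`, a PR that touches only `README.md` would be skipped, while a PR that touches both `README.md` and `models/orders.sql` would run and diff all changed models.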

Advanced settings: Sampling

Sampling allows you to compare large datasets more efficiently by checking only a randomly selected subset of the data rather than every row. By analyzing a smaller but statistically meaningful sample, Datafold can quickly estimate differences without the overhead of a full dataset comparison. To learn more about how sampling can result in a speedup of 2x to 20x or more, see our best practices on sampling.

| Field Name | Description |
|---|---|
| Enable sampling | Enable sampling for data diffs to optimize analyzing large datasets. |
| Sampling tolerance | The tolerance to apply in sampling for all data diffs. |
| Sampling confidence | The confidence to apply when sampling. |
| Sampling threshold | Sampling will be disabled automatically if tables are smaller than the specified threshold. If unspecified, default values will be used depending on the Data Connection type. |

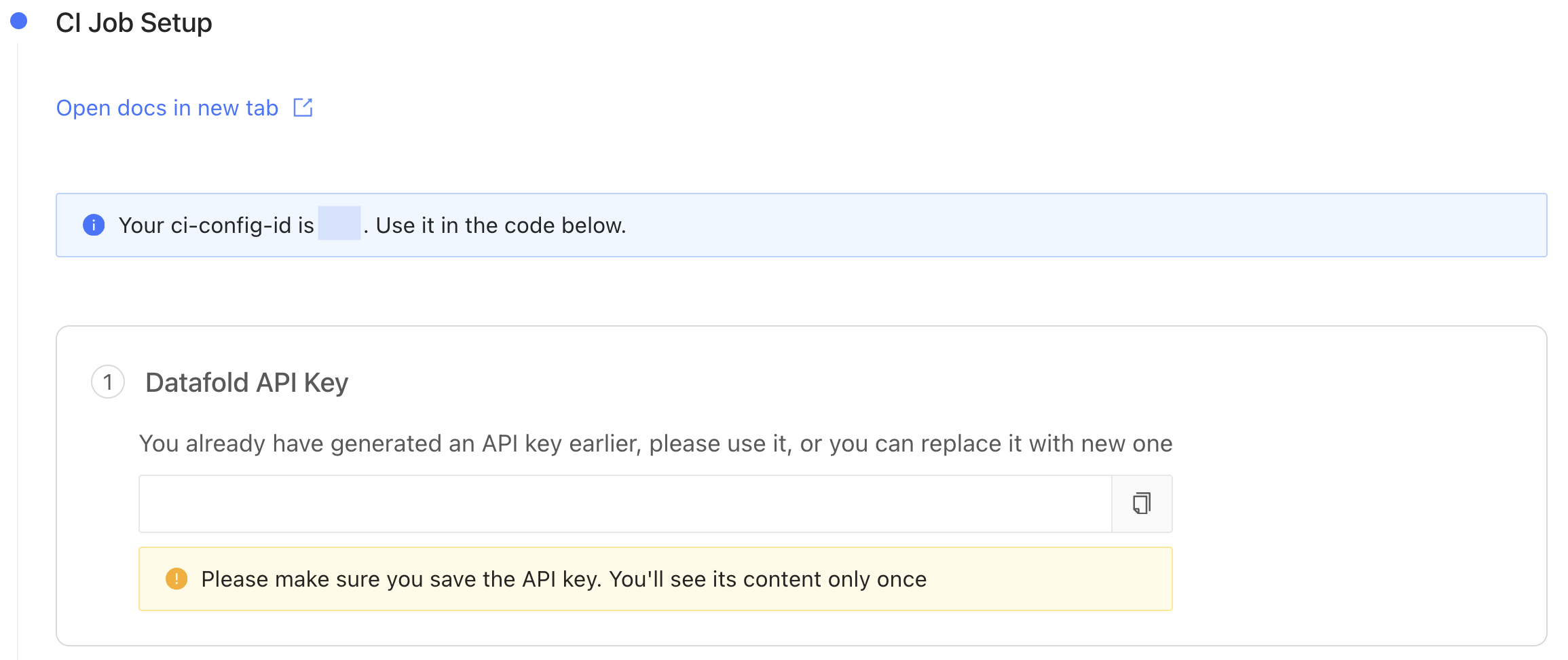

3. Obtain a Datafold API Key and CI config ID.

After saving the settings in step 2, scroll down and generate a new Datafold API Key and obtain the CI config ID.

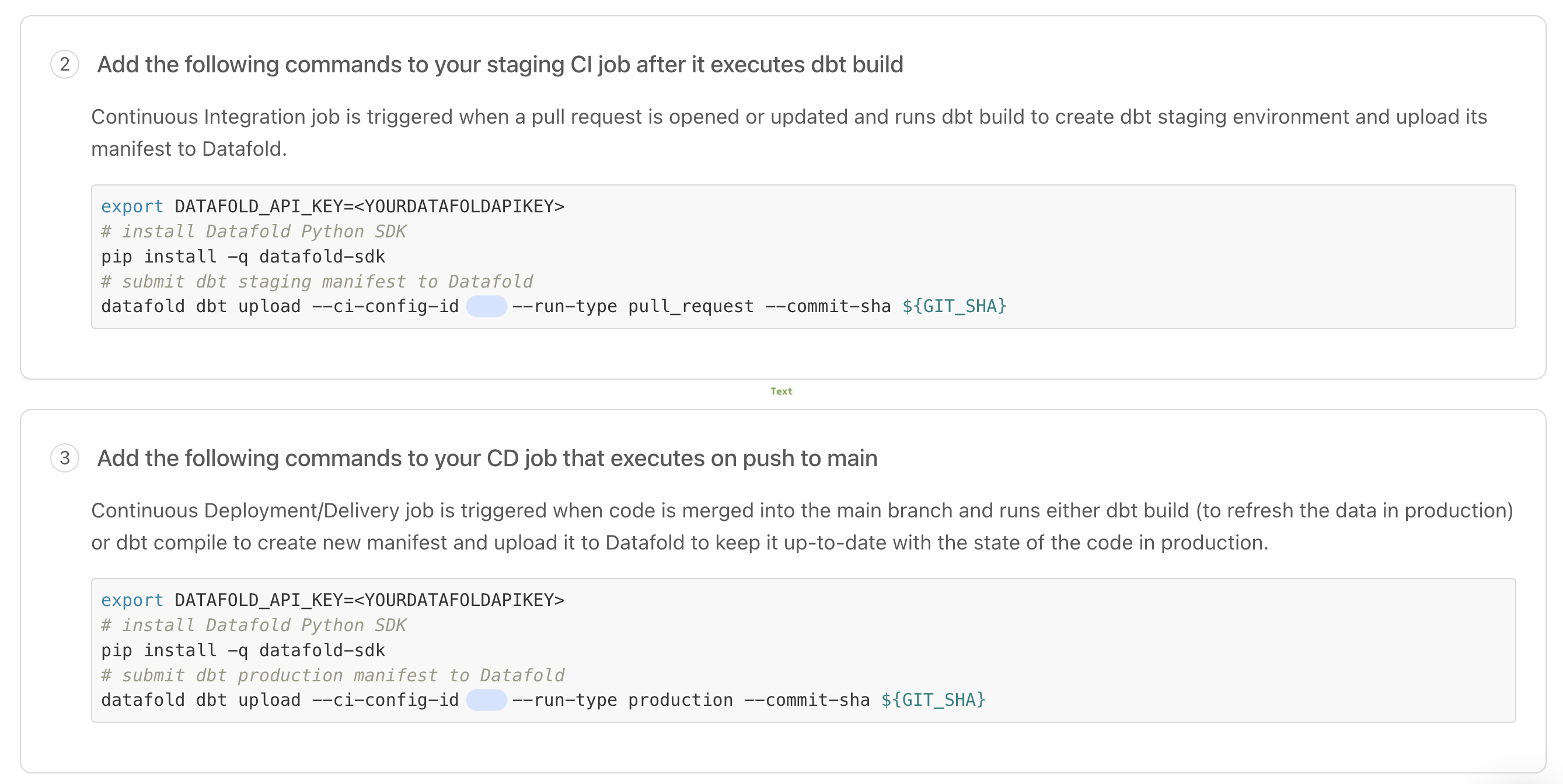

4. Configure your CI script(s) with the Datafold SDK.

Using the Datafold SDK, configure your CI script(s) to upload dbt `manifest.json` files with the `datafold dbt upload` command.

Upload `manifest.json` files in 2 scenarios:

- On merges to main. These `manifest.json` files represent the state of the dbt project on the base/production branch from which PRs are created.

- On updates to PRs. These `manifest.json` files represent the state of the dbt project on the PR branch.

Using these `manifest.json` files, Datafold determines which dbt models to diff in a CI run.

Implementation details vary depending on which CI tool you use. Please review these instructions and examples to help you configure updates to your organization’s CI scripts.
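As a rough sketch of the two upload scenarios, a CI script might wrap the upload in a helper that picks the run type from the branch. The flags `--ci-config-id`, `--run-type`, and `--commit-sha`, and the run type values `production` and `pull_request`, reflect our understanding of the `datafold-sdk` CLI and should be confirmed with `datafold dbt upload --help`. The function names and the `DATAFOLD_CI_CONFIG_ID` variable are hypothetical, and the API key is assumed to be available as `DATAFOLD_API_KEY` in the job environment.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and DATAFOLD_CI_CONFIG_ID are assumptions.

# run_type_for_branch BRANCH [BASE_BRANCH]
# Prints "production" for the base branch and "pull_request" otherwise.
run_type_for_branch() {
  local branch="$1" base="${2:-main}"
  if [ "$branch" = "$base" ]; then
    echo "production"
  else
    echo "pull_request"
  fi
}

# upload_manifest BRANCH COMMIT_SHA
# Uploads the manifest.json built by the preceding dbt step.
# Assumed flags; verify against `datafold dbt upload --help`.
upload_manifest() {
  local branch="$1" commit_sha="$2"
  datafold dbt upload \
    --ci-config-id "$DATAFOLD_CI_CONFIG_ID" \
    --run-type "$(run_type_for_branch "$branch")" \
    --commit-sha "$commit_sha"
}
```

A job could call `upload_manifest "$BRANCH" "$(git rev-parse HEAD)"` after its dbt build step, where `BRANCH` is whatever variable your CI tool exposes for the current branch.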

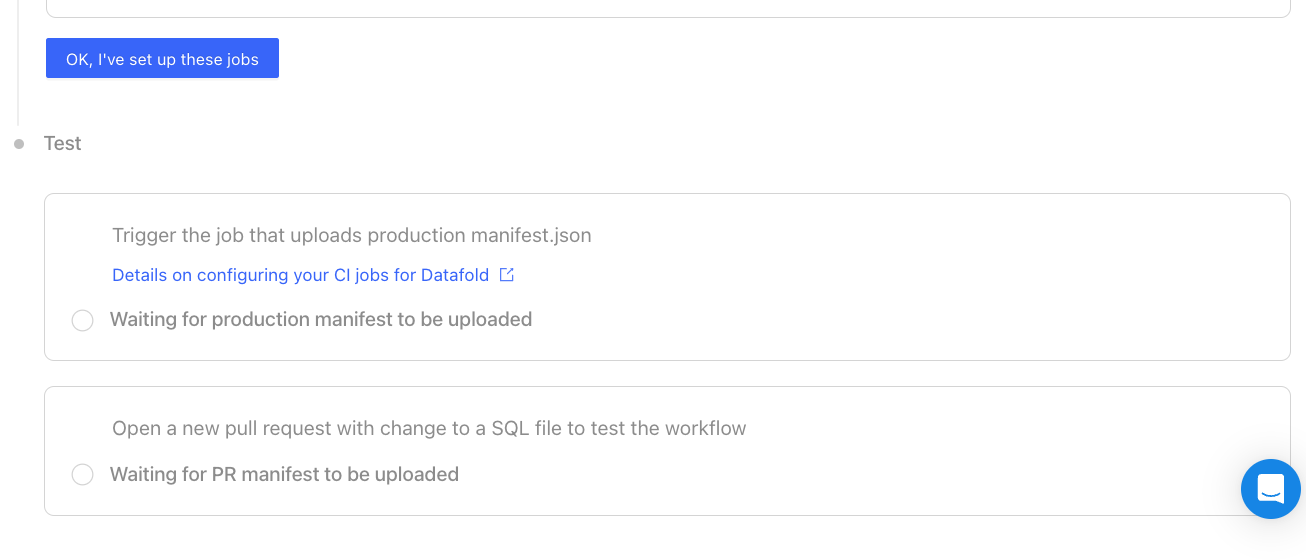

5. Test your dbt Core integration.

After updating your CI scripts, trigger jobs that will upload `manifest.json` files that represent the base/production state.

Then, open a new pull request with changes to a SQL file to trigger a CI run.

CI implementation tools

We’ve created guides and templates for three popular CI tools. To add Datafold to your CI tool, add `datafold dbt upload` steps in two CI jobs:

- Upload Production Artifacts: A CI job that builds a production `manifest.json`. This can be either your Production Job or a special Artifacts Job that runs on merge to main (explained below).

- Upload Pull Request Artifacts: A CI job that builds a PR `manifest.json`.

These uploads give Datafold the `manifest.json` files it needs, enabling us to run data diffs comparing production data to dev data.

- GitHub Actions

- CircleCI

- GitLab CI

Upload Production Artifacts

Add the `datafold dbt upload` step to either your Production Job or an Artifacts Job.

Production Job

If your dbt prod job kicks off on merges to the base branch, add a `datafold dbt upload` step after the `dbt build` step.

Artifacts Job

If your existing Production Job runs on a schedule and not on merges to the base branch, create a dedicated job that runs on merges to the base branch which generates and uploads a `manifest.json` file to Datafold.

Pull Request Artifacts

Include the `datafold dbt upload` step in your CI job that builds PR data.

Store Datafold API Key

Save the API key as `DATAFOLD_API_KEY` in your GitHub repository settings.

CI for dbt multi-projects

When setting up CI for dbt multi-projects, each project should have its own dedicated CI integration to ensure that changes are validated independently.

CI for dbt multi-projects within a monorepo

When managing multiple dbt projects within a monorepo (a single repository), it’s essential to configure individual Datafold CI integrations for each project to ensure proper isolation. This approach prevents unintended triggering of CI processes for projects unrelated to the changes made. Here’s the recommended approach for setting it up in Datafold:

1. Create separate CI integrations: Create separate CI integrations within Datafold, one for each dbt project within the monorepo. Each integration should be configured to reference the same GitHub repository.

2. Configure file filters: For each CI integration, define file filters to specify which files should trigger the CI run. These filters prevent CI runs from being initiated when files from other projects in the monorepo are updated.

3. Test and validate: Before deployment, test each CI integration to validate that it triggers only when changes occur within its designated dbt project. Verify that modifications to files in one project do not inadvertently initiate CI processes for unrelated projects in the monorepo.

Advanced configurations

Skip Datafold in CI

To skip the Datafold step in CI, include the string `datafold-skip-ci` in the last commit message.
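To make the rule concrete, the sketch below shows a commit that carries the marker and a small local check for it. Datafold performs its own check on the last commit message. The `has_skip_marker` helper is hypothetical and only mirrors the documented rule so you can confirm a commit message before pushing.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the given commit message contains the
# skip marker described above. It does not talk to Datafold.
has_skip_marker() {
  case "$1" in
    *datafold-skip-ci*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example commit that includes the marker anywhere in its message:
#   git commit -m "Fix typo in README datafold-skip-ci"
#
# Example local check of the most recent commit message:
#   has_skip_marker "$(git log -1 --pretty=%B)" && echo "skip marker present"
```

Because only the last commit message is considered, a later commit without the marker would bring the Datafold step back for that PR.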